Deploy fine-tuned LLMs without compromising on control

“Our ML engineers want to use Modal for everything. Modal helped reduce our VLM document parsing latency by 3x and allowed us to scale throughput to >100,000 pages per minute.”

“Modal lets us deploy new ML models in hours rather than weeks. We use it across spam detection, recommendations, audio transcription, and video pipelines, and it’s helped us move faster with far less complexity.”

Ship faster with Python-defined infrastructure

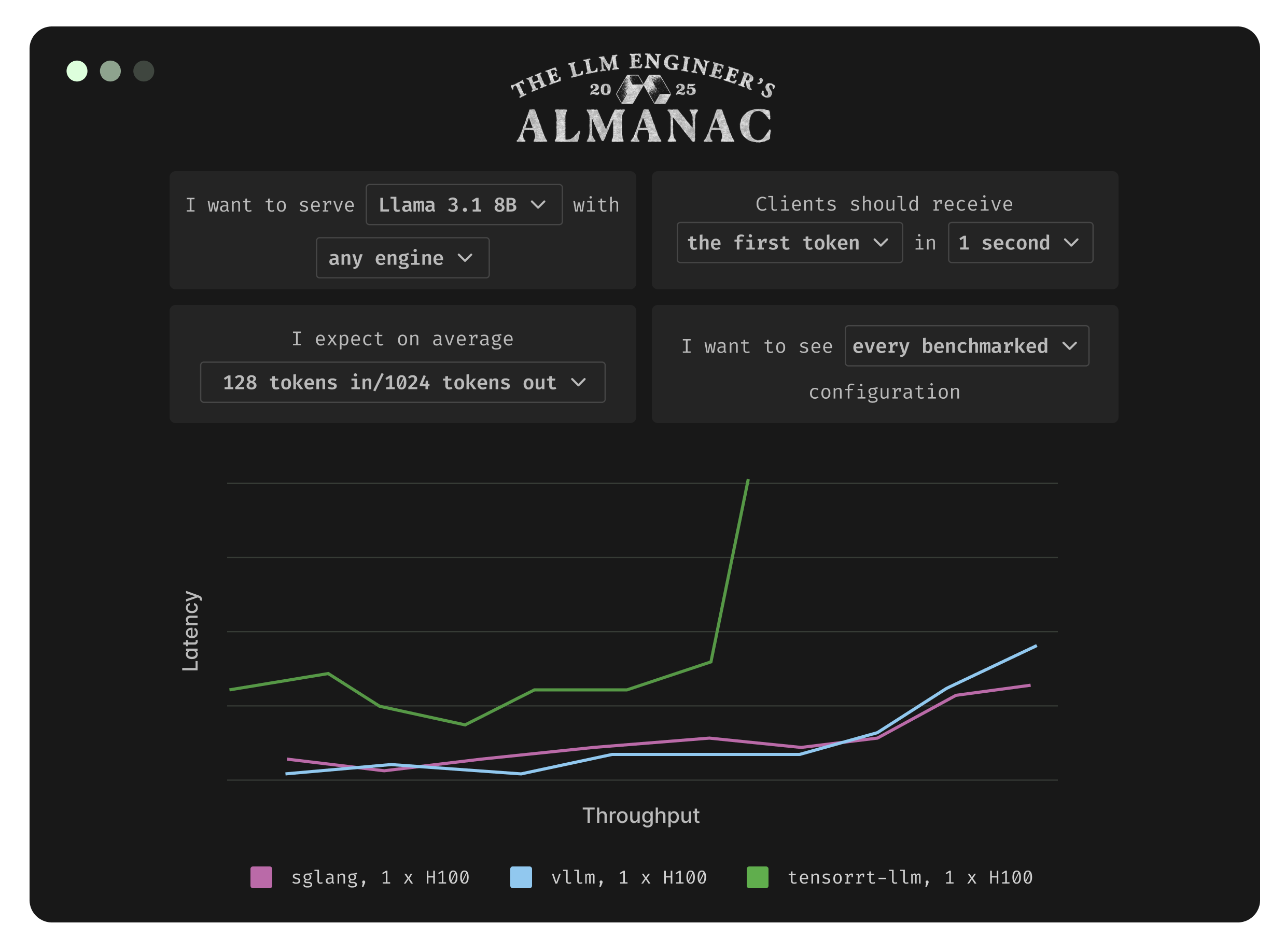

Inference optimizations that you control

Deploy any state-of-the-art or custom LLM using our flexible Python SDK.

Our in-house ML engineering team helps you implement inference optimizations specific to your workload.

You keep full control of all code and deployments, so you can iterate instantly. No black boxes.

Autoscale to thousands of GPUs without reservations

Modal’s Rust-based container stack spins up GPUs in < 1s.

Modal autoscales up and down with demand for maximum cost efficiency.

Modal’s proprietary cloud capacity orchestrator guarantees high GPU availability.
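Demand-based autoscaling can be pictured as a target-concurrency policy: run just enough GPU containers to keep in-flight requests per container near a target, and drop to zero when idle. The sketch below is a generic illustration under that assumption, not Modal's actual scheduler; `AutoscalerConfig`, `desired_replicas`, and the per-replica target are hypothetical names and parameters.

```python
import math
from dataclasses import dataclass


@dataclass
class AutoscalerConfig:
    # Hypothetical knobs for illustration only; not Modal's API.
    target_inputs_per_replica: int  # concurrent requests one GPU container should serve
    min_replicas: int = 0           # 0 allows scale-to-zero when there is no traffic
    max_replicas: int = 1000        # upper bound on GPU containers


def desired_replicas(in_flight: int, cfg: AutoscalerConfig) -> int:
    """Return how many GPU containers to run for the current number of in-flight requests."""
    if in_flight <= 0:
        # No load: shrink to the floor (zero by default), so idle GPUs are not billed.
        return cfg.min_replicas
    # Enough containers that each handles at most the target number of requests.
    needed = math.ceil(in_flight / cfg.target_inputs_per_replica)
    # Clamp to the configured floor and ceiling.
    return max(cfg.min_replicas, min(cfg.max_replicas, needed))


if __name__ == "__main__":
    cfg = AutoscalerConfig(target_inputs_per_replica=8)
    for load in (0, 17, 100_000):
        print(load, desired_replicas(load, cfg))
```

With a target of 8 requests per container, 17 in-flight requests map to 3 containers, and a burst of 100,000 is capped at the 1,000-container ceiling. Real autoscalers typically also add a scale-down delay so brief lulls do not cause containers to churn.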

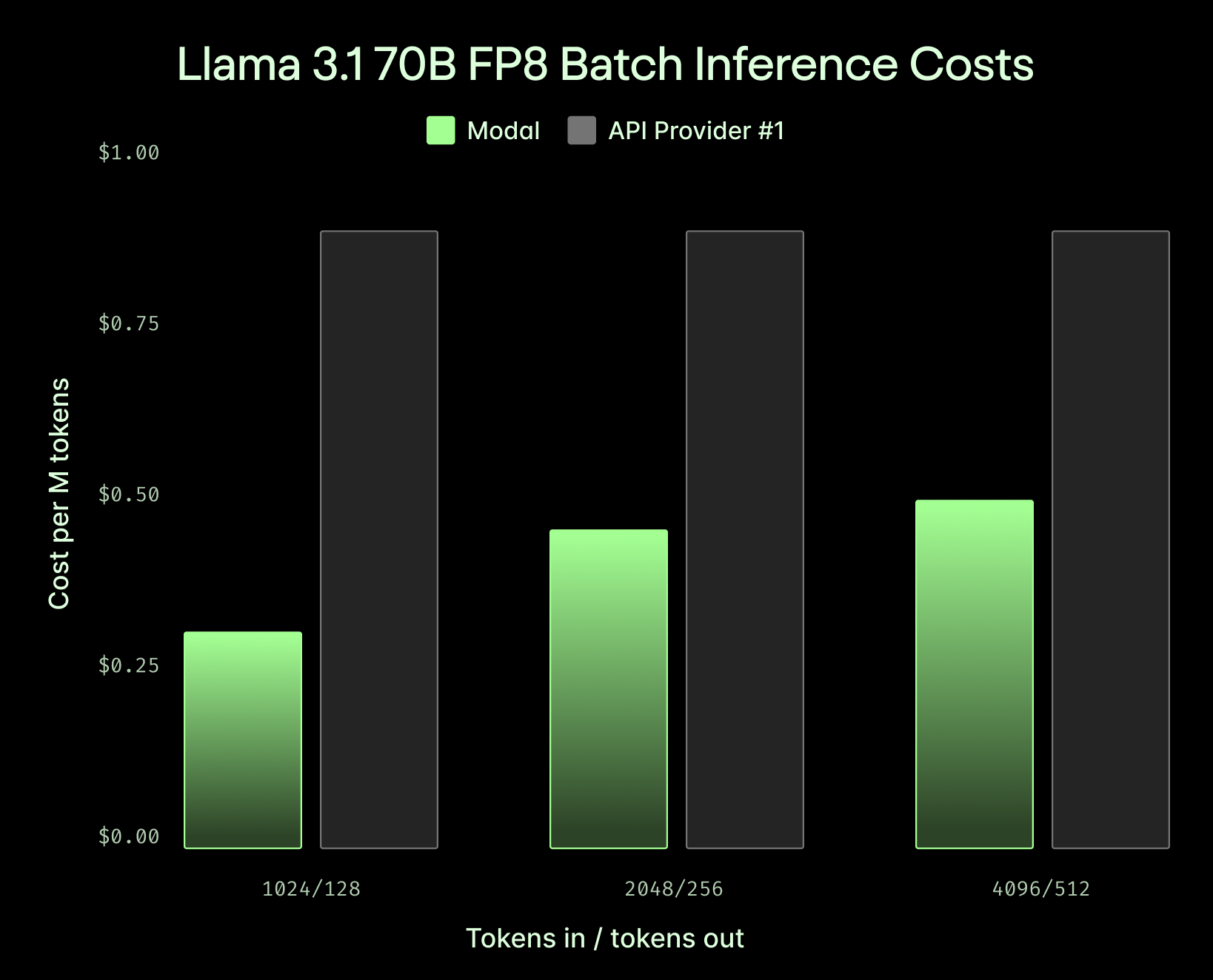

Unbeatable cost for batch inference

Save 50%+ on high-throughput, short-context tasks compared to API providers.

Sub-10ms network latency for online inference

A global GPU fleet runs close to your users, wherever they are. Modal also supports inference optimizations such as prefill disaggregation and prefix-aware routing.
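In general terms, prefix-aware routing sends requests that share a prompt prefix (for example, the same long system prompt) to the same replica, so that replica can reuse its cached prefill (KV cache) instead of recomputing it. The minimal sketch below assumes a character-based prefix as a stand-in for token blocks and uses rendezvous (highest-random-weight) hashing to pick a replica; `route`, `prefix_chars`, and the replica names are hypothetical and this is not Modal's router.

```python
import hashlib


def _score(prefix: str, replica: str) -> int:
    # Stable 64-bit score for a (prefix, replica) pair; the highest score wins.
    digest = hashlib.sha256(f"{prefix}|{replica}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


def route(prompt: str, replicas: list[str], prefix_chars: int = 256) -> str:
    """Pick a replica for a prompt using rendezvous hashing on its prefix.

    Assumption: the first `prefix_chars` characters approximate the shared,
    cacheable part of the prompt (real routers usually match token blocks).
    """
    if not replicas:
        raise ValueError("no replicas available")
    prefix = prompt[:prefix_chars]
    return max(replicas, key=lambda r: _score(prefix, r))


if __name__ == "__main__":
    replicas = ["gpu-0", "gpu-1", "gpu-2", "gpu-3"]
    system = "You are a helpful assistant. " * 20  # shared system prompt, longer than the prefix window
    print(route(system + "Question A", replicas))
    print(route(system + "Question B", replicas))  # same replica as above
```

Rendezvous hashing is used here because removing or adding a replica only remaps the prefixes that belonged to that replica, so most cached prefixes stay warm while the fleet scales. A production router would usually also weigh replica load so one popular prefix cannot overload a single GPU.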

Everything you need for production-grade deployments

Volumes

Load LLM weights quickly from any region.

Observability

Intuitive dashboards help you monitor the health of your deployments.

Enterprise-grade security

SOC 2 and HIPAA compliance, zero data retention, and more.